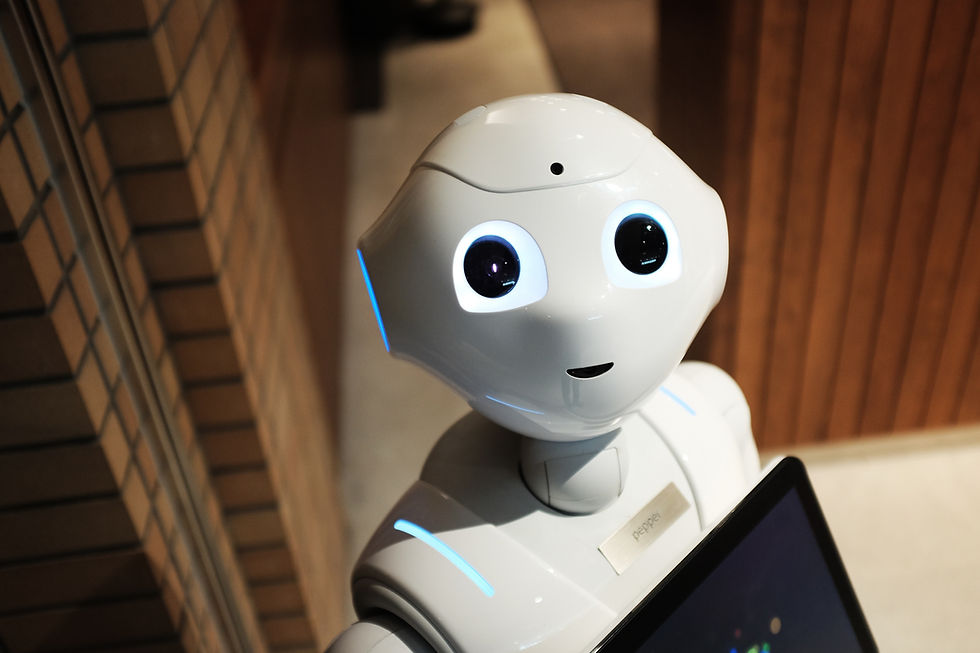

Replika – an AI chatbot

About

Virtual digital assistants like Alexa and Siri comb the web for information to answer the questions you ask. What is the weather today? What are the top news headlines? How much is 364 times 325? But these virtual assistants cannot go much further than this. For example, if you say, “Siri, I’m having a bad day and I could use your help,” it might respond, “I’m here if you want to talk.” If you push further, the response is typically something along the lines of “I don’t get it.” What if this digital voice did get it? Could it be a virtual friend? And what would this mean for data privacy and for the ethics of mental health support?

Author(s)

Elizabeth Luckman

Clinical Assistant Professor of Business Administration and RC Evans Data Analytics Scholar